Hooking up DynamoDB using Quarkus

Quarkus is proving to be very practical for building things fast. It's a shame the current support only uses the latest experimental AWS drivers.

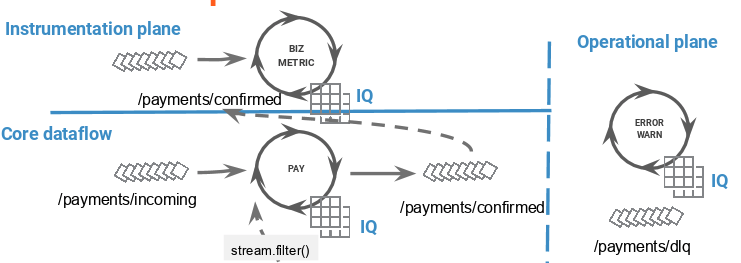

Streaming architectures are different. Whether building data-pipelines of event-driven microsevices, they all require a dataflow model to be constructed using sound principles that separate the core flow from the observability, operations and control planes. This 2 day session covers: technology strategy & selection, solution architecture, Kafka architecture, high-level data flow modelling, DevOps and automation. (tailor using services below)

Tooling of streaming platforms is different. We can be deployed to plan, adopt and implement technology strategy; including Cloud, automation, DevOps, build, application framework (Quarkus, Micronaut), distributed logging and CI/CD pipelines. (tailor using services below)

PoC implementation and planning sessions for Event Storming, Domain driven design, data flow, operations and modelling. Additionally, technical design for data centric functions such as data-governance, data-lineage, data-classification, events-as-an-API and more. (tailor using services below)

Let us help you build new or replatform existing applications to use the latest serverless technologies on AWS; AWS Lambda, DynamoDB, Quarkus and CloudEvents

We are experts with comprehensive knowledge of Confluent's Streaming platform. This includes deployment planning, technology selection and scaling. Some activities may include Confluent PS consultants as part of our partnership arrangement with them.

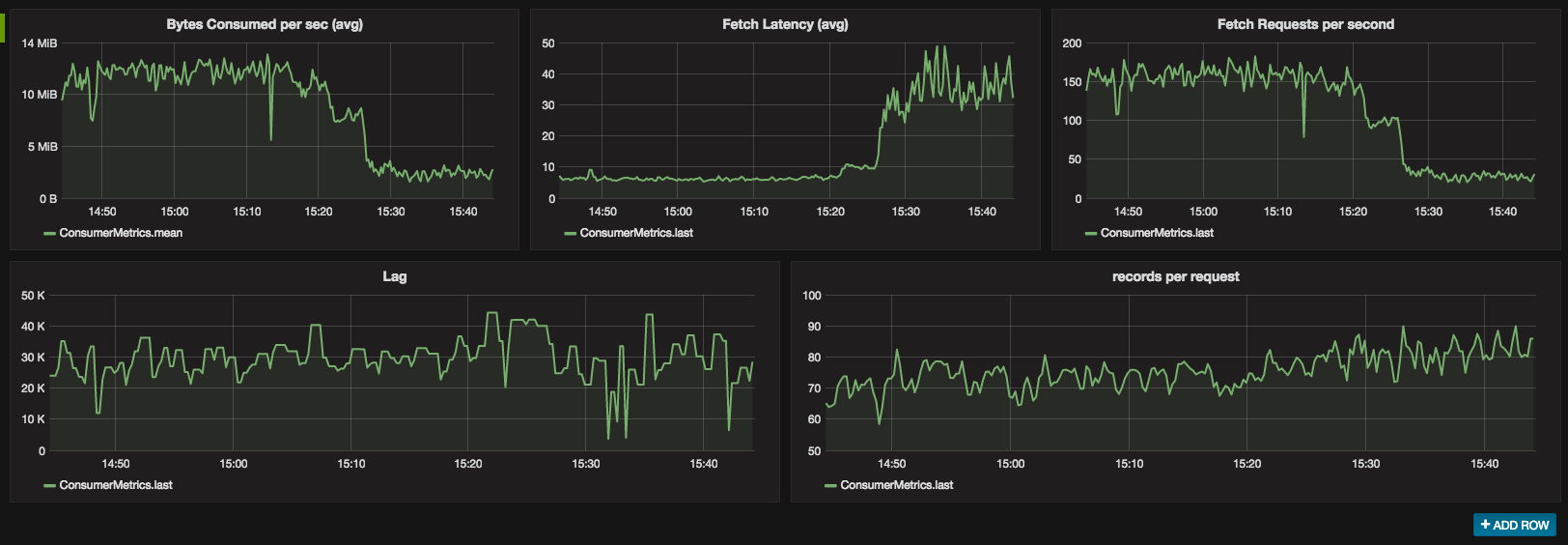

We also provide design and architecture services for Open Source Apache Kafka variants; including pure Apache Kafka, Confluent Community and others. Includes Kafka core, Kafka Connect and Kafka Streams as well as open source monitoring with Grafana and others.

We work with you to evaluate alternatives of Open-Source versus Vendor based solutions. Including core technology and surrounding tools to map to the solution architecture while capturing development and operational concerns.

Covering event-driven-design, Kafka, central nervous system, event modeling, operations, DevOps, CI/CD and multi-cloud to build a comprehensive development plan and blue-print; we will work with you to tailor the content

We scope, design and agree the overall plan while delivering on-site or remotely. This accelerator helps for common scenarios such as data pipelines, event-driven microservices or a central nervous system. More advanced PoCs include stream processing shoot-outs, technology selection (i.e. Quarkus, Graalvm) and tooling.

Event storming, domain driven design to capture data flows of domain and technical events. Define events as APIS and meet operational concerns for data evolution and observability.

Capture and design Operational concerns including deployment, upgrade, recovery. Implementation will include deadletter queues, log aggregation, filtering and alerting using Kafka Streams and other tooling.

Learn how to use the latest tools and techniques with Kafka; replay production incidents for regression scenario analysis, feature flag patterns, zero-down-time deployment

Uploading to S3 is now working. The next step is to wire in DynamoDB for the metastore.
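As a rough sketch of what that metastore might look like, the following keeps one record per uploaded S3 object and flattens it into attribute name/value pairs, which is the shape a DynamoDB item takes. Everything here is an assumption made for illustration: the `ObjectMeta` fields, the `bucket/key` partition key and the `MetaStore` interface are hypothetical names, and the in-memory map only stands in for the table so the example runs without any AWS dependency. In the real service the `put` and `get` calls would presumably go through the AWS SDK's `DynamoDbClient` instead.

```java
import java.time.Instant;
import java.util.HashMap;
import java.util.Map;
import java.util.Optional;
import java.util.concurrent.ConcurrentHashMap;

public class MetastoreSketch {

    /** Hypothetical metadata kept for each object uploaded to S3. */
    static final class ObjectMeta {
        final String bucket;
        final String key;
        final long sizeBytes;
        final Instant uploadedAt;

        ObjectMeta(String bucket, String key, long sizeBytes, Instant uploadedAt) {
            this.bucket = bucket;
            this.key = key;
            this.sizeBytes = sizeBytes;
            this.uploadedAt = uploadedAt;
        }

        /** Partition key; assumed here to be "bucket/key". */
        String id() {
            return bucket + "/" + key;
        }

        /** Flatten to attribute name -> value, mirroring a DynamoDB item. */
        Map<String, String> toItem() {
            Map<String, String> item = new HashMap<>();
            item.put("id", id());
            item.put("bucket", bucket);
            item.put("key", key);
            item.put("sizeBytes", Long.toString(sizeBytes));
            item.put("uploadedAt", uploadedAt.toString());
            return item;
        }

        /** Rebuild the record from a stored item. */
        static ObjectMeta fromItem(Map<String, String> item) {
            return new ObjectMeta(
                    item.get("bucket"),
                    item.get("key"),
                    Long.parseLong(item.get("sizeBytes")),
                    Instant.parse(item.get("uploadedAt")));
        }
    }

    /** Hypothetical metastore contract the upload code would depend on. */
    interface MetaStore {
        void put(ObjectMeta meta);

        Optional<ObjectMeta> get(String bucket, String key);
    }

    /** In-memory stand-in for the DynamoDB table (not the real client). */
    static final class InMemoryMetaStore implements MetaStore {
        private final Map<String, Map<String, String>> table = new ConcurrentHashMap<>();

        @Override
        public void put(ObjectMeta meta) {
            table.put(meta.id(), meta.toItem());
        }

        @Override
        public Optional<ObjectMeta> get(String bucket, String key) {
            return Optional.ofNullable(table.get(bucket + "/" + key))
                    .map(ObjectMeta::fromItem);
        }
    }

    public static void main(String[] args) {
        MetaStore store = new InMemoryMetaStore();
        store.put(new ObjectMeta("uploads", "report.csv", 2048L,
                Instant.parse("2020-01-01T00:00:00Z")));
        store.get("uploads", "report.csv")
                .ifPresent(m -> System.out.println(m.id() + " " + m.sizeBytes + " bytes"));
    }
}
```

Keeping the upload code behind a small interface like this means the DynamoDB-backed implementation can be swapped in later without touching the S3 path.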

Java development has now become meta-based. The use of annotation-based magic is overwhelming, but terrifyingly simple.